In 2017, Motherboard journalist Samantha Cole was the first to report on deepfakes in her article ‘AI-Assisted Fake Porn Is Here and We’re All Fucked’. Cole reported on a Reddit user called ‘deepfakes’ who had, in his words, ‘found a clever way to do face-swap’ using ‘a machine learning algorithm, using easily accessible materials and open-source code that anyone with a working knowledge of deep learning algorithms could put together.’ Cole reported that ‘deepfakes’ had created and posted on Reddit fake pornographic videos of Gal Gadot, Scarlett Johansson, and Taylor Swift, among other celebrity women. This marked the beginning of what has turned into a serious, growing, and global problem, with women being disproportionately affected by this technology.

Data & Society affiliates Britt Paris and Joan Donovan describe a deepfake as ‘a video that has been altered through some form of machine learning to “hybridize or generate human bodies and faces”’. While Reddit has since banned deepfakes on its platform, prohibiting the ‘dissemination of images or video depicting any person in a state of nudity or engaged in any act of sexual conduct apparently created or posted without their permission, including depictions that have been faked’, Cole’s reporting sparked broader discussions about the implications of this technology: what it means for distinguishing what is real from what is fake, and what it means for individuals who might be targeted by fake pornographic depictions of themselves (technology-facilitated abuse/image-based sexual abuse).

Since 2017, viral deepfakes have been created of Barack Obama, Tom Cruise, and the Queen, depicting them doing and saying things they did not say or do.

Law professor and deepfake scholar, Danielle Citron, and Robert Chesney from the University of Texas School of Law, wrote about the potential harmful implications of deepfakes for individuals and society. Citron and Chesney wrote that for individuals:

‘[t]here will be no shortage of harmful exploitations. Some will be in the nature of theft, such as stealing people’s identities to extract financial or some other benefit. Others will be in the nature of abuse, commandeering a person’s identity to harm them or individuals who care about them. And some will involve both dimensions, whether the person creating the fake so intended or not.’

Citron and Chesney also discussed the implications of deepfakes for distorting democratic discourse, eroding trust, manipulating elections, and national security, among other things. Citron said in her TED talk entitled ‘How deepfakes undermine truth and threaten democracy’ that ‘technologists expect that with advancements in AI, soon it may be difficult if not impossible to tell the difference between a real video and a fake one.’

While deepfakes pose a threat to society and democracy, in a 2019 report entitled ‘The State of Deepfakes: Landscape, Threats, and Impact’ by Deeptrace (now Sensity), it was found that 96% of deepfakes are pornographic, and 100% of those pornographic deepfakes are of women. Perhaps unsurprisingly, given the origin story of ‘deepfakes’ and the way deepfakes were first used to create fake, non-consensual pornographic material, the human and gendered implications of deepfakes remain the most significant threat of this technology.

The incorrect labelling of deepfakes

There is a lot of discussion among deepfake experts and technologists about what actually constitutes a ‘deepfake’, and whether less advanced forms of media manipulation should be considered deepfakes. These include ‘cheap fakes’, which require ‘less expertise and fewer technical resources’, whereas deepfakes require ‘more expertise and technical resources’. ‘Cheap fake’ is a term coined by Paris and Donovan, who define it as:

‘an AV manipulation created with cheaper, more accessible software (or, none at all). Cheap fakes can be rendered through Photoshop, lookalikes, re-contextualizing footage, speeding, or slowing.’

To experts, the term ‘deepfake’ is being used incorrectly and loosely to refer to any kind of manipulated/synthetic media even though the material in question is not technically a deepfake. Moreover, according to deepfake experts, media outlets have incorrectly labelled content as a deepfake when it is not.

The incorrect labelling of content as deepfakes, and in particular the labelling of less advanced manipulated material as deepfakes, has raised concerns among experts that it muddies the waters and compromises our ability to accurately assess the threat of deepfakes. For example, Mikael Thalen, a writer at the Daily Dot, reported on a case in the US about a ‘mother of a high school cheerleader’ who was ‘accused of manipulating images and video in an effort to make it appear as if her daughter’s rivals were drinking, smoking, and posing nude.’ In Thalen’s article, it was reported that:

‘The Bucks County District Attorney’s Office, which charged [the mother] with three counts of cyber harassment of a child and three counts of harassment, referenced the term deepfake when discussing the images as well as a video that depicted one of the alleged victim’s vaping.’

According to Thalen’s reporting, ‘[e]xperts are raising doubts that artificial intelligence was used to create a video that police are calling a “deepfake” and is at the center of an ongoing legal battle playing out in Pennsylvania’. World-leading experts on deepfakes Henry Ajder and Nina Schick commented on this situation, with Schick pointing out in a tweet that ‘the footage that was released by ABC wasn’t a #deepfake, and it was irresponsible of the media to report it as such. But really that’s the whole point. #Deepfake blur lines between what’s real & fake, until we can’t recognise the former.’

My experience with deepfakes and cheap fakes

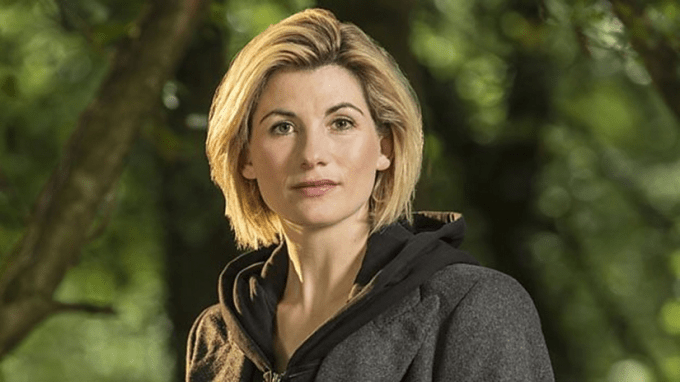

At the age of 18, I discovered that anonymous sexual predators had been creating and sharing fake, doctored pornographic images of me, and had been targeting me well before my discovery (since I was 17 years old). Over time, the perpetrators continued to create and share this fake content, which proliferated on the internet and became more and more graphic. The abuse I was experiencing, coupled with a number of other factors, including that there were no specific laws to deal with this issue at the time, eventually led me to speak out publicly about my experiences of altered intimate imagery in 2015/16 and help fight for law reform in Australia. After a few years, distributing altered intimate images and videos became criminalised across Australia (thanks to the collective efforts of academics, survivors, policy makers and law makers). However, during and after I had spoken out, and during and after the law reform changes across Australia, the perpetrators kept escalating their abuse. In 2018, I received an email saying that there was a ‘deepfake video of [me] on some porn sites’. I was later sent a link to an 11-second video depicting me engaged in sexual intercourse, and shortly after I discovered another fake video depicting me performing oral sex. The 11-second video has been verified by a deepfake expert to be a deepfake, and the fake video depicting me performing oral sex appears to be, according to a deepfake expert, a cheap fake or manual media manipulation. The altered and doctored images of me are not deepfakes.

Issues with terminology and the incorrect labelling of deepfakes

While I agree with deepfake experts and technologists that incorrectly labelling content as a deepfake is problematic, there are a number of issues I have with this terminology discussion and the incorrect labelling of deepfakes, which I set out below.

Before I discuss them, I should point out that deepfakes and cheap fakes have been, and can be, created for a number of different purposes and reasons, not simply as a way to carry out technology-facilitated abuse. However, given what we know about deepfakes, and how they are primarily used to create non-consensual pornographic content of women, these terms are inextricably linked to their impact on women, and therefore ought to be considered through that lens.

I should also point out that I am neither an academic, deepfake expert, nor a technologist, but I do have a vested interest in this issue given my own personal experiences, and I do believe that I have just as much of a right to include my perspectives on these issues, as these conversations should be as diverse as possible in the marketplace of ideas, and should not be limited in who can talk about them when it directly and disproportionately affects many women in society.

First, the term ‘deepfake’ itself is problematic because of its origin as a way to commit non-consensual technology-facilitated abuse. Why should we give credence to a term that has been proven to be weaponised primarily against women, and in so doing immortalise the ‘legacy’ of a Reddit user whose actions have brought to the fore another way for other perpetrators to cause enormous amounts of harm to women across the world? I take issue with labelling AI-facilitated manipulated media as ‘deepfakes’, even though it’s the term the world knows it by. There ought to be a broader discussion about changing our language and terminology for this technology, because the current term does not adequately capture the primary way it has been, and continues to be, used by perpetrators to carry out abuse against women.

Second, the term ‘cheap fake’ is also problematic to the extent that it might refer to material that has been manipulated – in less advanced ways than a deepfake – to create fake, intimate content of a person. Using the term ‘cheap fake’ to describe fake manipulated pornographic content of a person is potentially harmful as it undermines the harm that a potential victim could be experiencing. The word ‘cheap’ connotes something that is less valuable and less important, and that is particularly harmful in the context of people who might be a target of technology-facilitated abuse.

Third, I would argue that from a potential victim’s perspective, it is irrelevant that there even is a distinction between deepfakes and cheap fakes because a potential victim might still experience the same harm, regardless of the technology used to make the content.

Fourth, as AI advances, the capacity of laypeople (and even experts) to distinguish what is real from what is fake is likely to be affected, including laypeople’s capacity to determine what is a deepfake and what is a cheap fake. While there is significant validity to experts casting doubt on the incorrect labelling of deepfakes, I worry that as deepfake technology advances, a kind of ‘asymmetry of knowledge’ will emerge between technologists and experts, who will be best placed to determine what is real or fake, and laypeople, who may not be able to do so. This ‘asymmetry of knowledge’ ought to be a primary consideration when casting doubt on deepfake claims, especially for those who might innocently claim something is a deepfake, whether that be a victim of technology-facilitated abuse or indeed media organisations who report on it. Innocently claiming something to be a deepfake can be distinguished from intentionally claiming something to be a deepfake when it isn’t, or vice versa; in the latter case, it would be important for experts to cast doubt on such claims.

In conclusion, there ought to be a broader discussion of our use of the terms ‘deepfakes’ and ‘cheap fakes’, given their origin and primary use case. There is also validity to experts casting doubt on claims of deepfakes. However, there are important factors that ought to be considered when doing so, including whether the distinction is relevant, and whether claims were made innocently or not.

The newest morphed image is me photoshopped onto the cover of a porn film. ‘Buttman’s Big Tit Adventure Starring Noelle Martin and 38G monsters’, it says.
